Australian health experts are warning that Meta is removing social media posts designed to educate the public about dangerous illicit drugs, raising concern that vital health information is being blocked at the very moment it is needed most. ABC reported on April 7 that public health workers say lives could be put at risk when educational alerts about harmful substances are taken down by automated moderation systems.

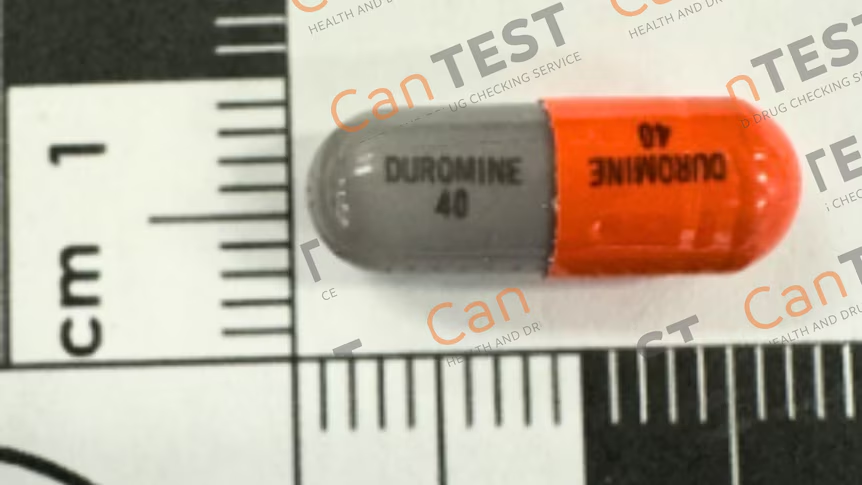

The concern centers on harm reduction groups that use platforms such as Facebook and Instagram to warn people about especially potent or contaminated drugs circulating in the community. According to ABC and the Canberra Times, posts from services including Pill Testing Australia and CanTEST were removed or restricted even though they were intended as public safety messages rather than promotion of drug use.

Health experts say the removals highlight a serious flaw in automated content moderation. They argue that social media systems may be treating drug education posts the same way they treat content that promotes illegal substances, making it harder for the public to access warnings about overdose risks and dangerous new compounds. The ABC and Canberra Times reports describe educational alerts being censored despite their public health purpose.

The issue is especially sensitive because these warnings are often shared ahead of large festivals and events, where fast access to accurate information can affect people's safety. ABC reported that advocates are now calling on Meta to revise its moderation approach and want Australia’s eSafety Commissioner to step in.

The dispute adds to a broader debate over whether major platforms can reliably distinguish between harmful content and legitimate health communication. In this case, the reported removals and the calls for regulatory intervention suggest that the current system risks silencing the very messages meant to keep people safe.